A few weeks ago a Dell XPS 13 Developer Edition arrived on my doorstep.

Wait, what? I thought you were a Mac guy

I’ve been using a 15-inch MacBook Pro as my daily machine for ten years, but before that I was on a Dell running Ubuntu for a few years and I’m on Linux servers every day.

I’ve been looking for a smaller machine to use as a secondary/backup laptop. I thought about getting a new 13-inch MacBook Pro, but it sounds like Apple may refresh the line-up later this year or next year, so I decided to wait and then maybe replace my 15-inch with something from the new line-up, assuming they don’t do something stupid (I’m looking at you, Touch Bar).

I thought about a Chromebook, but that seemed too limiting. I know developers have been successful on high-end Chromebooks, especially if they are able to use cloud-based IDEs, and there was a recent announcement about running Linux apps on Chrome OS, but I honestly would rather just run Linux.

I decided to get a laptop that ships with Linux. Sure, I could buy any laptop that has good compatibility feedback and just install Linux myself, but I figured, why not let someone else do that work for me? I quickly narrowed down the list to the System76 Galago Pro and the Dell XPS 13 Developer Edition (model 9370). After reading lots of reviews I decided to go for the Dell. I like System76 as a company, and I hope to buy some other hardware from them in the future, but the XPS 13 gets lots of great reviews in terms of build quality, which is ultimately why I decided on the Dell.

This is a secondary machine, so I could have skimped on the specs to save some dough. Instead, I decided to provision the new laptop as if it were my primary dev machine to make it as useful as possible as a backup and roadie. If it’s anemic, I won’t use it, and the whole idea is to actually use the thing. When Apple eventually refreshes the MBP lineup, I want to make a good decision about whether to stay the course with MacBook Pro or make the switch back to Linux full-time.

Impressions after three weeks of occasional use

The Dell XPS 13 has an aluminum top and bottom with a carbon fiber deck. I was worried about the case not being all-aluminum, but that worry was unfounded–this is a solid machine. It’s small and light but has a sturdy, well-put-together feel. The aluminum case and the black carbon fiber look pretty sweet. I can’t get over how thin it is.

I’m not quite used to the keyboard yet–I feel like I’m stretching to get to the left ctrl key and I consistently hit PgDn when I mean to hit the right arrow. The keys have more travel than I thought they would based on the thin case, which is good.

The trackpad is much better than I thought it would be–I had come to believe that only Apple could do trackpads right. I was wrong. The Dell trackpad is a pleasure to use. I will say that with its large size relative to the small deck and my big mitts, I’ve inadvertently palm-touched it more than once.

While researching the machine I saw several mentions of the thin bezel around the display, but I didn’t pay much attention to what seemed to me like an insignificant detail. That was until I opened the lid and fired it up. The display is simply gorgeous. I can’t stop looking at it: it’s so big, bright, and crisp. Comparing it to my daughter’s 13-inch MacBook Pro, I now get the bezel thing. The XPS 13 is basically wall-to-wall display.

I’ve always associated small laptops with lackluster performance, but, damn, this thing is super snappy. It’s got an 8th Gen Intel Core i7 CPU with 4 cores (8 threads) that runs up to 4.0 GHz. Mine has 16 GB of LPDDR3 2133 MHz RAM and a 500 GB SSD. That’s a lot of punch to be packing in this small package. I’ve had the fan kick on a few times, like when I’m running several Docker containers, but most of the time it’s quiet.

There have been reports of “coil whine”–a high-pitched noise often associated with video cards–on these machines, but I have yet to hear it.

I haven’t given the battery a fair shake yet–the specs claim 19 hours, which seems like a stretch based on what I’ve seen so far. The charging adapter is tiny, which I’ll really appreciate when I take it on the road.

This year’s model has three USB-C ports, a card reader, and a headphone jack, which is plenty for my needs.

Enough hardware, let’s talk software

The XPS 13 Developer Edition ships with Ubuntu 16.04 LTS. I use Linux all of the time, so I wasn’t worried about being productive immediately on the OS. I suspect that developers considering a move from macOS to Ubuntu (or another Linux distribution) would also find the transition easy, even if they rarely use Linux, because the two operating systems’ shared Unix heritage makes them very similar, especially from a command-line perspective.

My biggest worry was VPN. It seems like every one of my clients uses a different VPN setup. Many of my clients already struggle to get my Mac connected, so I had low hopes for the Ubuntu machine. The results have been mixed–I was able to use a combination of OpenConnect and Shrew Soft VPN to get connected to most of my clients from the machine. Not all of them are working quite yet. (Ironically, VPNs based on Dell SonicWall have given me the most trouble.)

Other than VPN, getting everything else set up was smooth. The most critical software all installed without incident: Firefox, IntelliJ IDEA, Atom, VirtualBox, Docker, Pidgin, ReText, Java, Maven, and Node.js.

I thought for a second I wouldn’t be able to use my Yubikey because of the USB-C ports, but Dell ships an adapter and that worked great, as did the Yubikey GUI.

I’m super happy with the machine so far–it’s going to meet my requirements quite well. Whether or not I come full-circle and return to Linux as my primary daily development machine OS will depend on my eventual success with VPN as well as what Apple decides to do with the MacBook Pro line-up. Until then, I’m enjoying this little beast.

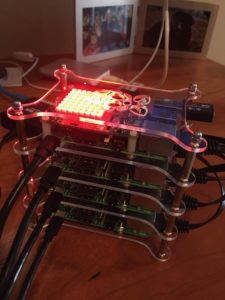

I love virtual machines and containers because they make it easy to isolate the applications and dependencies I’m using for a particular project. Tools like Docker, VirtualBox, and Vagrant are indispensable for most of my projects and I’m still using those, but in this post I’ll describe a product called Antsle, which has given me additional flexibility and has freed up some local resources.
