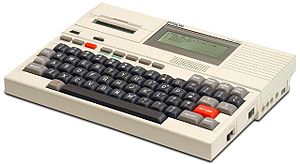

This little beauty is an Epson HX-20. It’s one of the first laptop computers. What makes it special to me is that its 4-line LCD screen is where I took my first coding steps in the early 1980s.

My Dad was an IT guy. He’d bring home the Epson so he could work remotely. I’d been to his office before and had spent hours playing hide-and-seek in the data center with my sister or hucking write protect rings from mag tapes across the room like frisbees. To this day a raised floor and the smell of chilled, vaguely plastic, air brings back fond memories. But what he did all day at work was completely abstract to me until he brought that little Epson home.

Learning those first few lines of BASIC removed a lot of the mystery about how computers worked. It was clear I could tell the computer to do anything I wanted, and it would follow those instructions to the letter. I quickly caught the bug and eventually got my own computer. (We were a staunch Atari family. Sorry, Commodore fans!)

Fast forward 30 years…

Now I work in software and my own son is learning to code. When he was 12 or so he was running Linux on his laptop. Then he became interested in coding so I taught him Python. He has since picked up JavaScript, some HTML/CSS, and is now learning Java in high school.

When I think about his experience learning to code compared to mine, there are some similarities:

- Exposure. He has access to various devices (laptops, PCs, Macs, tablets) and operating systems at home and at school.

- Curiosity. More than using these devices for entertainment, he’s curious about how they work. I see plenty of kids with their faces buried in a tablet for hours on end. But if they don’t care to know how those apps or that game or that web site came to be they may not be ready to dive into coding.

- Encouragement. What’s great is that not only does he have his Dad and Grandpa encouraging him to dive in, he’s got lots of teachers who understand the importance of code. (More on the curriculum in a minute).

So those are the ingredients that were the same for both of us. What’s different for this generation of kids learning to code? A lot, and it’s not just about ever-increasing computing power at lower and lower prices.

There are a ton of great resources kids have to help them learn to code these days. Let me call out some of them. I’ll put them in three buckets: Tools, Curriculum, and Communities.

Tools

When I learned to code the tools were virtually non-existent. I had a computer, the BASIC programming language, and a reference manual. Now there are all kinds of freely-available, cross-platform tools that are great for teaching kids to code. Here are a few.

For many kids, Lego Mindstorms is a great entry point into coding. This is coding that feels like play. Mindstorms comes with a drag-and-drop programming app that you use to build programs as if you were assembling building blocks. The program is then downloaded to the robot and boom: the kid sees their own creation obeying their commands.

If you don’t like the drag-and-drop IDE there are several traditional coding environments for a variety of languages that work with Mindstorms.

Scratch was the first coding tool my son was exposed to in school. Hosted at MIT, Scratch was purpose-built for teaching kids to code. Its graphical interface is similar to Mindstorms in some respects.

One of the things I love about Scratch is that kids can share their projects and use the projects of others as a starting point for their own creations. It’s never too early to start teaching kids about open source-style collaboration!

Alice is a story-telling tool. Kids manipulate 3D objects on a canvas. By giving instructions to the objects in the virtual world, kids learn the basics of object oriented programming.

Jeroo is another tool that teaches kids programming by manipulating objects in a virtual world. In this one you have an island. On the island are these mammals, Jeroos, who like to eat flowers. Coding lessons involve creating instances of Jeroos and getting them to eat instances of flowers.
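To give a feel for what "creating instances" means, here is a minimal sketch in Python (the language I taught my son), not in Jeroo's own language. The `Jeroo` and `Flower` classes and the `hop` and `eat` methods are names I made up for illustration; they are not Jeroo's actual API.

```python
class Flower:
    """A flower growing at one spot on the island (hypothetical class)."""

    def __init__(self, x, y):
        self.x = x
        self.y = y


class Jeroo:
    """An illustrative stand-in for a Jeroo; not the real Jeroo tool."""

    def __init__(self, name, x=0, y=0):
        self.name = name
        self.x = x
        self.y = y
        self.flowers_eaten = 0

    def hop(self, dx, dy):
        """Move the Jeroo by the given offsets."""
        self.x += dx
        self.y += dy

    def eat(self, island):
        """Eat the flower at the current spot, if any. Returns True if one was eaten."""
        for flower in island:
            if (flower.x, flower.y) == (self.x, self.y):
                island.remove(flower)
                self.flowers_eaten += 1
                return True
        return False


# The "island" here is just a list of Flower instances.
island = [Flower(1, 0), Flower(2, 2)]

kim = Jeroo("Kim")   # create one instance of Jeroo
kim.hop(1, 0)        # tell that instance to move onto a flower
kim.eat(island)      # tell it to eat the flower it is standing on

print(kim.name, "has eaten", kim.flowers_eaten, "flower(s);", len(island), "left")
# prints: Kim has eaten 1 flower(s); 1 left
```

The point is the same one the virtual island makes visually: the class is the recipe, each Jeroo or flower is a separate object made from it, and you get things done by sending instructions to those objects.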

Whereas Scratch, Alice, and Jeroo are similar to multiple object-oriented languages, Greenfoot is about teaching kids a specific language: Java. Working in Greenfoot, students write standard Java to build all kinds of programs. It’s a nice tool for learning Java before moving into a full-fledged IDE.

The Raspberry Pi is a tiny computer and a code-learning tool all wrapped into one for $25 – $35. It’s about the size of a credit card. Plug this little guy into a keyboard, mouse, and monitor and you’ve got yourself a Linux box with 512 MB of RAM that’s ready for code. Python, Java, C, C++, Ruby, and Scratch are all installed by default.

Curriculum

I grew up in a small rural town. In the mid-80s, my high school computer science curriculum consisted of a year of typing (on these things called typewriters) and a year of programming. The programming class was divided into a semester of typing and 10-keying (this time, on a computer) and a semester of BASIC.

In our school system, programming starts fairly early, in 7th grade, using tools that don’t really feel like coding. It is then offered every year, gradually getting more complex throughout high school.

Here is the curriculum by grade. I’ll include rough age equivalents so my readers outside the U.S. can map it to their grade levels:

- 7th Grade (~13 years old): Technology, Tools: HTML, Scratch, Alice

- 8th Grade (~14 years old): Principles of Communication in Business & Technology, Tools: HTML, Scratch, Alice

- 8th Grade (~14 years old): Engineering, Tools: Google SketchUp, Lego Mindstorms, VEX Robotics

- 9th Grade (~15 years old): Pre-AP Computer Science, Tools: Scratch, Alice, Jeroo, Greenfoot (Java)

- 10th Grade (~16 years old): AP Computer Science, Tools: Eclipse (Java)

Unfortunately, that’s it. Nothing is offered beyond AP Computer Science.

Community

When I was a kid there was no Internet, CompuServe was out of my financial reach, and Bulletin Board Systems were just taking off. That meant learning to code (especially in a small town) was largely a solitary pursuit.

With ubiquitous network connectivity, today’s kids have instant access to a globally connected community of learning resources, mentors, and others who are also learning to code. Here are a few examples of resources and communities my son has found helpful:

- Codecademy is a community of educators and students. There are courses on Python, JavaScript, Ruby, HTML, and others, all available for free.

- Khan Academy is another free learning community that offers computer programming.

- Once kids know a little bit about programming, if they are interested in learning Python, my son found Google’s Python Class very useful.

The Role of Open Source in Teaching Kids to Code

Open source software plays a huge role in teaching kids to code. Many of the tools I’ve mentioned in this blog post are open source or freely available. But, more importantly, kids can dig into the hundreds of thousands of open source projects that are out there to see how they work and, eventually, to participate in those projects by writing documentation, helping with QA, and coding bug fixes. Open source doesn’t care how old you are. Mozilla, in particular, has been a wonderful and supportive project that my son has been participating in.

One of the ways high school students can get introduced to open source is through Google Code-in. Each fall, various open source projects create a bunch of tasks they need done. Students participating in Code-in work on these tasks. Tasks might be things like writing documentation, creating unit tests, working on a web site, you name it.

At the end of Code-in, the open source projects select the students who made the most and best contributions and those students (and a parent) are flown to San Francisco for a tour of the Googleplex.

Encourage Your Kid and/or Somebody Else’s Kid

Today kids are exposed to computing nearly all day, every day. A small percentage of them will be curious enough to want to learn how it works. You can use some of the tools and resources I’ve listed here to encourage them to dive in and you can get them plugged in to open source and code-learning communities on the Internet so they can dig deeper on their own.

The hardware, software, and network have changed a lot since I was a kid, but one thing hasn’t changed: A wonderful world of discovery and opportunity awaits young coders.

This week, December 9 – December 15, is Computer Science Education Week. They are encouraging people to spend an hour teaching someone to code. They’ve got tutorials ready to go. All they need is your time!

What tools, resources, or communities have your kids leveraged as they’ve learned to code? How does your local high school’s computer science curriculum differ from this one? Let me know!